Mar 29, 2026

MIT and PoliMi Advance AI’s Power to Explain Its Own Decisions

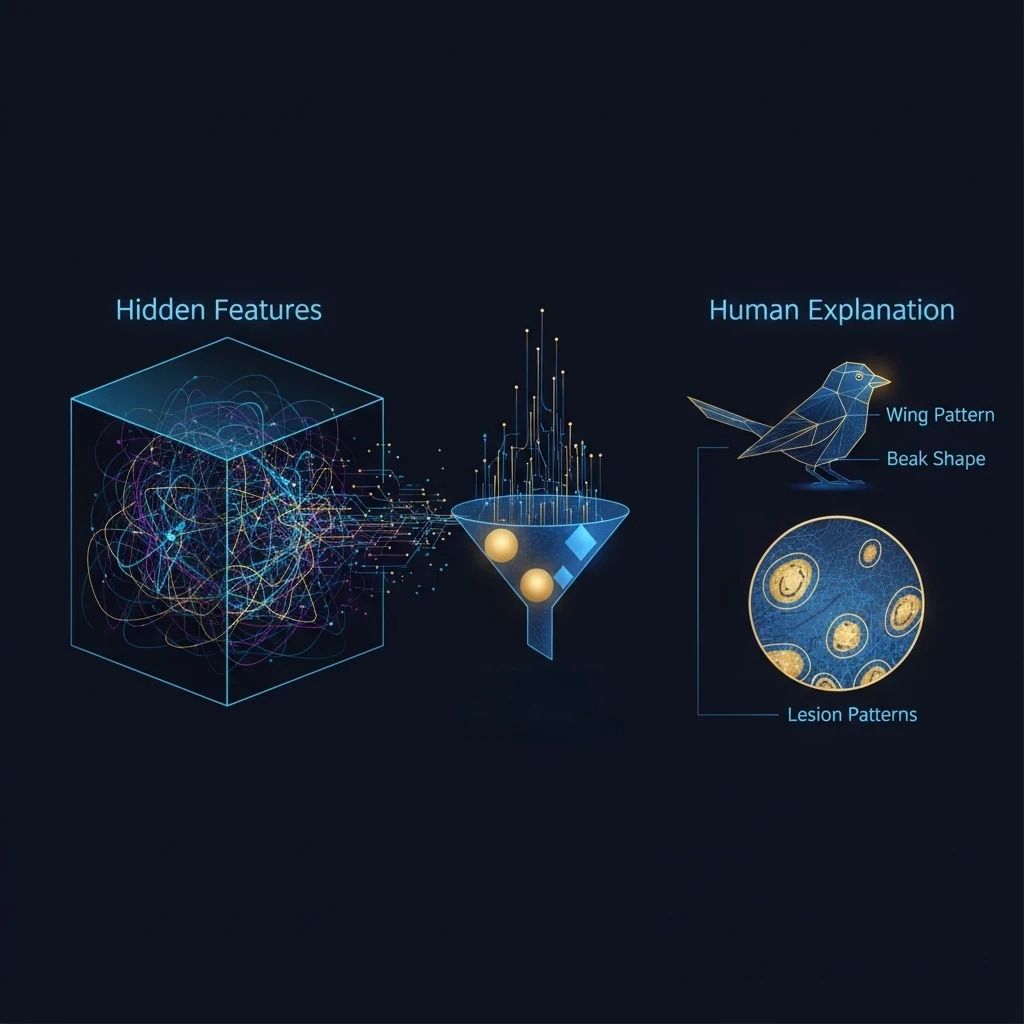

Researchers at MIT and the Polytechnic University of Milan have developed a framework that enables AI vision systems to explain their predictions in natural language. The method extracts key features the model has already learned, translates them into human-understandable concepts, and constrains predictions to those concepts. This improves both accuracy and transparency on tasks such as bird species recognition and skin lesion classification.

Researchers at MIT and the Polytechnic University of Milan have introduced a new method that helps AI vision systems explain their predictions in clear, human language, a crucial step for sensitive fields like health care and autonomous driving.

Instead of relying on concepts predefined by human experts, the approach automatically discovers the most important internal features a model has already learned and converts them into understandable concepts. A sparse autoencoder distills these features into a small set of core concepts. A multimodal large language model then describes each concept in natural language and uses those descriptions to annotate images. A concept bottleneck module is trained on these annotations and integrated back into the original model, so that each prediction depends on only a handful of explicit concepts.
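To make the pipeline more concrete, here is a minimal NumPy sketch of its shape under stated assumptions. It is not the researchers' implementation, and the article does not specify their architecture. The sketch assumes a top-k sparse autoencoder for the feature-discovery step, a linear concept layer trained by logistic regression, and a bottleneck that keeps only the `k` strongest concepts per image. The MLLM naming and annotation step cannot be reproduced in a few lines, so placeholder concept names and random labels stand in for it. All class, function, and variable names (`SparseAutoencoder`, `ConceptBottleneck`, `topk_sparse`, `concept_0`, and so on) are hypothetical, and the features are random stand-ins for a real backbone's output.

```python
import numpy as np

rng = np.random.default_rng(0)


def topk_sparse(z: np.ndarray, k: int) -> np.ndarray:
    """Keep the k largest entries in each row of z; zero out everything else."""
    out = np.zeros_like(z)
    idx = np.argpartition(z, -k, axis=1)[:, -k:]
    rows = np.arange(z.shape[0])[:, None]
    out[rows, idx] = z[rows, idx]
    return out


def sigmoid(x: np.ndarray) -> np.ndarray:
    return 1.0 / (1.0 + np.exp(-x))


def bce(p: np.ndarray, y: np.ndarray, eps: float = 1e-9) -> float:
    """Mean binary cross-entropy between predicted probabilities and 0/1 labels."""
    return float(-np.mean(y * np.log(p + eps) + (1 - y) * np.log(1 - p + eps)))


# Step 1 (assumed variant): a top-k sparse autoencoder over frozen backbone features.
# Weights are random here; a real SAE would be trained to reconstruct the features.
class SparseAutoencoder:
    def __init__(self, d_in: int, n_latents: int, k: int):
        self.W_enc = rng.normal(0.0, 0.1, (d_in, n_latents))
        self.b_enc = np.zeros(n_latents)
        self.W_dec = rng.normal(0.0, 0.1, (n_latents, d_in))
        self.k = k

    def encode(self, x: np.ndarray) -> np.ndarray:
        # ReLU, then keep at most k active latents per example
        return topk_sparse(np.maximum(x @ self.W_enc + self.b_enc, 0.0), self.k)

    def decode(self, z: np.ndarray) -> np.ndarray:
        return z @ self.W_dec


# Step 2 (MLLM naming and annotation) is replaced by placeholders further below.


# Step 3 (hypothetical): a concept bottleneck mapping features to named concepts,
# then predicting classes from only the k strongest concepts per image.
class ConceptBottleneck:
    def __init__(self, d_in: int, concept_names: list[str], n_classes: int, k: int):
        self.names = concept_names
        self.W_c = np.zeros((d_in, len(concept_names)))
        self.W_y = rng.normal(0.0, 0.1, (len(concept_names), n_classes))  # untrained class head
        self.k = k

    def concept_probs(self, x: np.ndarray) -> np.ndarray:
        return sigmoid(x @ self.W_c)

    def fit_concepts(self, x: np.ndarray, labels: np.ndarray, lr: float = 1.0, steps: int = 100):
        """Gradient descent on mean binary cross-entropy against concept annotations."""
        for _ in range(steps):
            grad = x.T @ (self.concept_probs(x) - labels) / labels.size
            self.W_c -= lr * grad

    def predict(self, x: np.ndarray):
        active = topk_sparse(self.concept_probs(x), self.k)  # the bottleneck
        return (active @ self.W_y).argmax(axis=1), active

    def explain(self, active_row: np.ndarray) -> list[str]:
        return [self.names[j] for j in np.flatnonzero(active_row)]


feats = rng.normal(size=(64, 32))  # stand-in for backbone features of 64 images
sae = SparseAutoencoder(d_in=32, n_latents=128, k=8)
codes = sae.encode(feats)
recon_err = float(np.mean((sae.decode(codes) - feats) ** 2))

names = [f"concept_{j}" for j in range(10)]  # placeholders for MLLM-written descriptions
annotations = (rng.random((64, 10)) < 0.3).astype(float)  # stand-in for MLLM annotations
cbm = ConceptBottleneck(d_in=32, concept_names=names, n_classes=5, k=3)
loss_before = bce(cbm.concept_probs(feats), annotations)
cbm.fit_concepts(feats, annotations)
loss_after = bce(cbm.concept_probs(feats), annotations)
preds, active = cbm.predict(feats)
print(preds[0], cbm.explain(active[0]))
```

The top-k step inside `predict` is what gives each prediction a short, readable explanation. The class head only ever sees three concept scores per image, so `explain` can list exactly the concepts the prediction relied on.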

In tests on bird species recognition and skin lesion classification, the technique achieved higher accuracy and produced clearer explanations than existing methods. This is a step toward making black-box AI systems more transparent and accountable.

Reference: MIT News