Mar 29, 2026

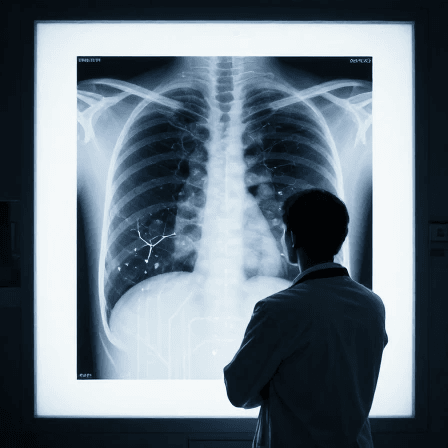

AI-Made X-rays Can Fool Doctors And Other AIs

A new study shows that AI-generated chest X‑rays are difficult for both radiologists and AI models to detect. Researchers warn that realistic medical deepfakes could contaminate diagnostic datasets, disrupt clinical workflows, and even be used to falsify evidence, highlighting the need for stronger safeguards and transparency around synthetic medical images.

The study found that even expert radiologists struggle to spot AI-generated chest X‑rays, raising urgent concerns about the integrity of medical imaging and research. Researchers used generative AI to create synthetic X‑rays and mixed them with real scans. Radiologists correctly identified fewer than half of the fake images, and large language models performed just as poorly at telling real scans from fake ones.

The findings suggest that highly convincing medical deepfakes could slip into clinical workflows, corrupt training data for diagnostic AI systems, or even be used to falsify evidence in scientific papers and court cases. The authors call for stronger safeguards, better validation tools, and transparency whenever synthetic medical images are used.

Reference: Nature