Improving Seedling Count Accuracy with 3D Computer Vision

Discover how improving seedling count accuracy with 3D computer vision helps agribusinesses optimize yields. Learn how Sairone's AI enhances crop monitoring.

Written by Amirhossein

Reviewed by Boshra

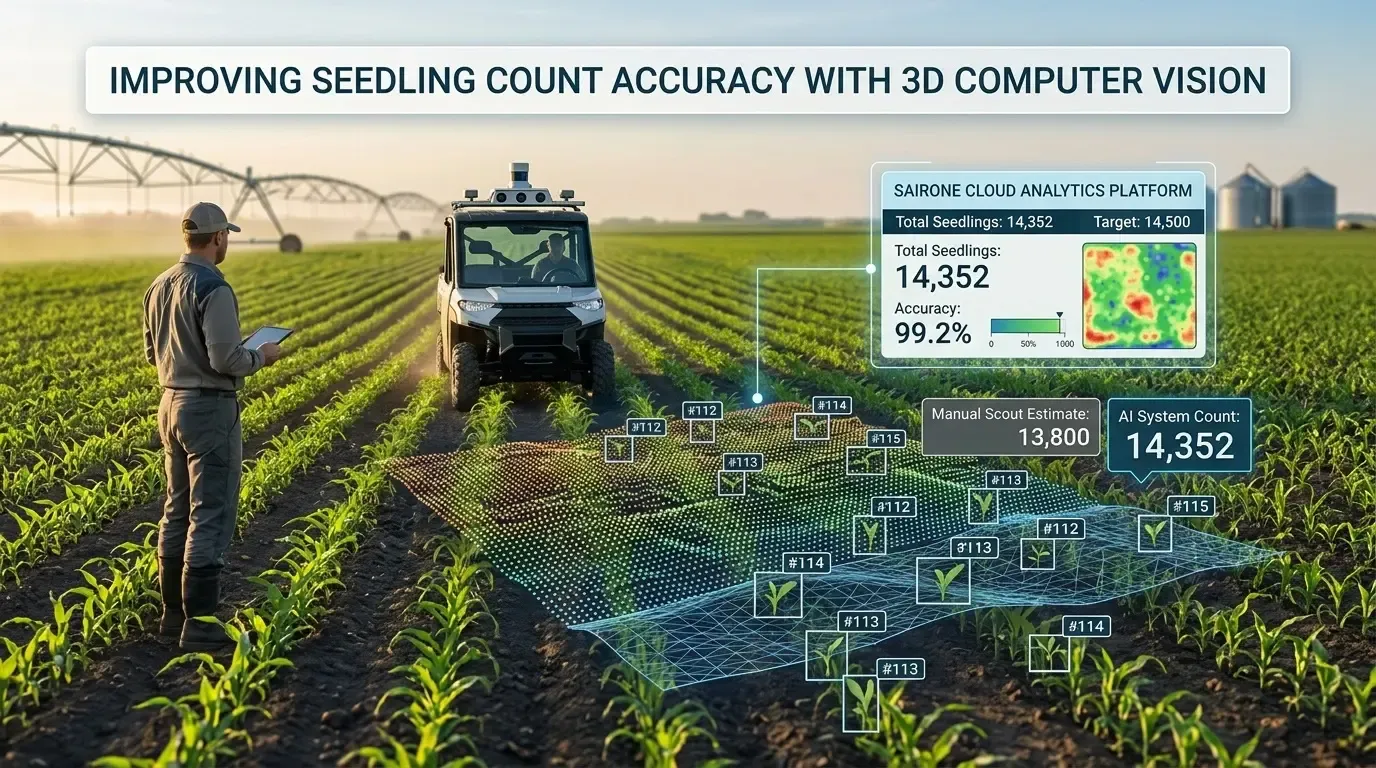

Agribusinesses and farm managers face a critical challenge early in the season: knowing how many plants successfully emerged so they can make timely replanting and input decisions. Manual counting is slow, labor-intensive, and prone to sampling errors that distort field-wide estimates. This article explores the transition from traditional scouting methods to advanced AI systems, focusing specifically on improving seedling count accuracy with 3D computer vision. It explains how modern sensors, point cloud processing, and Sairone's cloud-based computer vision platform can turn early-stage crop monitoring into a precise, data-driven workflow.

Understanding Seedling Counting in Precision Agriculture

Accurate emergence data sets the foundation for the entire growing season. Without knowing the exact plant population, agronomic models for yield prediction and input allocation fall apart. Precision agriculture relies on high-fidelity data, making early-stage plant counting a critical operational metric.

Why Seedling Count Accuracy Matters for Yield and Input Planning

The number of successfully established seedlings directly dictates the yield potential of a field. If plant density is too low, farmers must decide within a narrow time window whether to replant, a costly decision that requires precise data. Conversely, overpopulation can lead to resource competition, stunting crop growth. Accurate counts allow farm managers to optimize side-dress fertilizer applications, adjust irrigation schedules, and forecast harvest logistics with greater confidence.

Common Sources of Error in Manual and 2D Image-Based Seedling Counts

Human scouts typically count a small fraction of a field (often less than one percent) and extrapolate the results, which introduces large sampling error whenever emergence is uneven. When early automated systems adopted 2D cameras, they struggled with the physical realities of agriculture. 2D images compress a three-dimensional world into flat pixels, making it difficult for software to distinguish between two overlapping leaves and a single large leaf.
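To make the extrapolation problem concrete, here is a minimal sketch of how uncertainty grows when a few sample plots are scaled up to a whole field. The plot counts, plot size, and field size are invented for illustration, and the interval uses a rough normal approximation rather than a proper survey design.

```python
import math
import statistics

def extrapolate_stand(sample_counts, plot_area_m2, field_area_m2):
    """Scale per-plot seedling counts to a field total with a rough 95% interval."""
    n = len(sample_counts)
    mean = statistics.mean(sample_counts)
    # Standard error of the mean, from the sample standard deviation.
    sem = statistics.stdev(sample_counts) / math.sqrt(n)
    plots_in_field = field_area_m2 / plot_area_m2
    estimate = mean * plots_in_field
    # 1.96 is the normal-approximation multiplier (an assumption; small
    # samples would normally use a wider t-based interval).
    half_width = 1.96 * sem * plots_in_field
    return estimate, half_width

# Hypothetical scouting data: six 4 m^2 plots in a 40 ha (400,000 m^2) field.
counts = [28, 35, 22, 31, 19, 33]
estimate, half_width = extrapolate_stand(counts, plot_area_m2=4.0, field_area_m2=400_000.0)
print(f"Estimated stand: {estimate:,.0f} plants, +/- {half_width:,.0f}")
```

With these made-up numbers the six plots cover 24 square meters of the field, and the estimate of 2.8 million plants carries an uncertainty of roughly half a million, which is wide enough to blur a replant decision.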

Field Conditions That Complicate Accurate Seedling Detection

Agricultural environments are chaotic and unpredictable. Several environmental factors routinely disrupt standard imaging:

- Heavy crop residue that visually blends with green seedlings.

- Extreme soil color variations caused by shifting moisture levels.

- Harsh, direct sunlight that casts deep shadows, altering the shape of the plants.

- Weed pressure that mimics the color and texture of the target crop.

Traditional Seedling Counting vs 3D Vision-Based Approaches

The agricultural industry is moving away from rudimentary counting methods as farms scale and labor becomes scarce. AI-based seedling counting offers a substantial gain in reliability, moving from rough estimates toward plant-level digital inventories.

Manual Scouting, 2D Imaging, and Simple Thresholding Methods

Historically, automated counting relied on simple color thresholding—programming a computer to count green pixels against brown soil. This method fails when weeds are present or lighting changes. Standard 2D imaging improved upon this by using basic object detection, but it still lacks spatial context, leading to undercounting in dense rows where plants grow tightly together. Meanwhile, manual scouting remains highly prone to human error and relies on extrapolating small sample sizes to entire fields, limiting its accuracy.
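The color thresholding described above can be sketched in a few lines. This example uses the Excess Green index (ExG = 2g - r - b on normalized channels), which is one common choice and an assumption here, since the article does not name a specific index. The pixel values and the threshold are illustrative.

```python
def excess_green_mask(image, threshold=0.1):
    """Return a boolean mask where ExG = 2g - r - b exceeds the threshold.

    `image` is a list of rows; each row is a list of (R, G, B) tuples in 0-255.
    """
    mask = []
    for row in image:
        mask_row = []
        for r, g, b in row:
            total = r + g + b
            if total == 0:
                mask_row.append(False)  # pure black carries no color information
                continue
            # Normalized channels reduce, but do not remove, brightness effects.
            rn, gn, bn = r / total, g / total, b / total
            mask_row.append(2 * gn - rn - bn > threshold)
        mask.append(mask_row)
    return mask

# Illustrative pixel colors (assumed values, not measured data).
SOIL, CROP, WEED = (120, 85, 60), (60, 160, 50), (70, 150, 60)
image = [
    [SOIL, CROP, SOIL, WEED],
    [SOIL, CROP, SOIL, SOIL],
]
mask = excess_green_mask(image)
green_pixels = sum(cell for row in mask for cell in row)
print(green_pixels)
```

The weed pixel passes the threshold just like the crop pixels, which is the failure mode the paragraph describes: color alone cannot tell the two apart.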

Limitations of 2D Approaches in Overlapping Plants and Variable Illumination

When a tractor camera captures a top-down 2D image, overlapping canopies hide smaller seedlings underneath. Furthermore, variable illumination changes the RGB values of a plant from minute to minute as clouds pass. Because 2D cameras lack depth perception, they cannot use the physical height of a plant to differentiate it from a flat weed or a shadow.

Advantages of 3D Computer Vision for Occlusion Handling and Height-Based Separation

3D computer vision systems for agriculture address occlusion by adding a Z-axis (depth) to the data. By understanding the geometric structure of the scene, the software can separate overlapping plants based on their structural contours.

| Feature | 2D Imaging | 3D Computer Vision |
|---|---|---|
| Depth Perception | None | High |
| Overlapping Plants | Poor separation | Excellent separation |
| Shadow Interference | High | Minimal (depth ignores shadows) |
| Weed Differentiation | Relies entirely on color/shape | Uses structural height and volume |

Stereo Vision Cameras for Seedling Counting

Capturing three-dimensional data in an outdoor agricultural environment requires specialized hardware. Stereo vision for crop counting has emerged as a robust solution that mimics human depth perception to map field rows accurately.

Principles of Stereo Vision and Disparity Estimation in Crop Rows

Stereo cameras use two lenses spaced a set distance apart to capture two slightly different angles of the same crop row. The software calculates the "disparity", the pixel shift between the left and right images. Objects closer to the lens shift more than objects far away. By measuring this disparity, the system estimates the distance to every leaf and soil clod, generating a topographic map of the seedling bed.
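For a rectified stereo pair, the relationship between disparity and distance is the standard pinhole relation Z = f * B / d, where f is the focal length in pixels, B the baseline, and d the disparity. The sketch below applies it to two example pixels; the focal length, baseline, and disparity values are assumptions chosen for illustration.

```python
def depth_from_disparity(disparity_px, focal_px, baseline_m):
    """Pinhole stereo relation Z = f * B / d for a rectified image pair."""
    if disparity_px <= 0:
        return None  # no valid match, or a point effectively at infinity
    return focal_px * baseline_m / disparity_px

FOCAL_PX = 1400.0   # assumed focal length in pixels
BASELINE_M = 0.06   # assumed 6 cm spacing between the lenses

leaf_depth = depth_from_disparity(120.0, FOCAL_PX, BASELINE_M)  # closer, larger shift
soil_depth = depth_from_disparity(112.0, FOCAL_PX, BASELINE_M)  # farther, smaller shift
print(f"leaf at {leaf_depth:.2f} m, soil at {soil_depth:.2f} m, "
      f"height {soil_depth - leaf_depth:.2f} m")
```

Under these assumptions, an 8-pixel difference in disparity corresponds to a leaf sitting about 5 cm above the soil.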

Camera Baseline, Resolution, and Field-of-View Considerations

The physical design of the stereo camera dictates its effectiveness in the field. The "baseline" (distance between the two lenses) determines depth accuracy; a wider baseline is better for objects farther away, while a narrow baseline is ideal for close-up seedling scanning. High resolution is necessary to capture thin cotyledon leaves, while a wide field-of-view ensures that the camera can capture multiple crop rows simultaneously as the tractor drives.
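The baseline trade-off can be illustrated with two standard approximations: depth uncertainty grows as Z^2 * Δd / (f * B), and the closest measurable distance is f * B / d_max, where d_max is the matcher's disparity search range. All numeric values below (focal length, search range, matching error, working distance) are assumptions for the sake of the example.

```python
def depth_error_m(depth_m, focal_px, baseline_m, disparity_error_px=0.25):
    """Approximate depth uncertainty: dZ = Z^2 * dd / (f * B)."""
    return depth_m ** 2 * disparity_error_px / (focal_px * baseline_m)

def min_depth_m(focal_px, baseline_m, max_disparity_px):
    """Closest distance whose disparity still fits the matcher's search range."""
    return focal_px * baseline_m / max_disparity_px

FOCAL_PX = 1400.0          # assumed focal length in pixels
MAX_DISPARITY_PX = 192     # assumed search range of the stereo matcher
WORKING_DISTANCE_M = 0.7   # assumed camera height above the seedlings

for baseline_m in (0.06, 0.12):
    err_mm = 1000 * depth_error_m(WORKING_DISTANCE_M, FOCAL_PX, baseline_m)
    nearest = min_depth_m(FOCAL_PX, baseline_m, MAX_DISPARITY_PX)
    usable = nearest <= WORKING_DISTANCE_M
    print(f"baseline {baseline_m:.2f} m: error ~{err_mm:.2f} mm, "
          f"min depth {nearest:.2f} m, usable at 0.7 m: {usable}")
```

With these numbers, doubling the baseline halves the depth error, but the wider rig cannot match anything closer than about 0.88 m, so only the narrow baseline works at a 0.7 m scanning height.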

Typical Stereo Vision Pipelines for Seedling Detection in Row Crops

A standard stereo vision pipeline follows a sequential process to ensure accuracy. It begins with image rectification to align the left and right frames. Next, a stereo matching algorithm computes the disparity map. Finally, the disparity map is converted into a 3D point cloud, where each point represents a physical coordinate (X, Y, Z) on the plant or soil surface.
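The last step of the pipeline, turning a disparity map into a point cloud, can be sketched as a back-projection through the pinhole camera model. Rectification and stereo matching are assumed to have been done already (in practice by a library such as OpenCV, for example with `stereoRectify` and `StereoSGBM`). The tiny disparity map and camera parameters are illustrative assumptions.

```python
def disparity_to_points(disparity, focal_px, baseline_m, cx, cy):
    """Back-project a disparity map into (X, Y, Z) points in the camera frame.

    `disparity` is a list of rows of disparity values in pixels; values <= 0
    mark pixels where the matcher found no correspondence. (cx, cy) is the
    principal point in pixels.
    """
    points = []
    for v, row in enumerate(disparity):
        for u, d in enumerate(row):
            if d <= 0:
                continue  # skip invalid matches
            z = focal_px * baseline_m / d   # depth along the optical axis
            x = (u - cx) * z / focal_px     # lateral offset
            y = (v - cy) * z / focal_px     # vertical offset in the image plane
            points.append((x, y, z))
    return points

# A 2x2 toy disparity map: one invalid pixel, one leaf pixel, two soil pixels.
disparity = [
    [0.0, 112.0],
    [120.0, 112.0],
]
cloud = disparity_to_points(disparity, focal_px=1400.0, baseline_m=0.06, cx=0.5, cy=0.5)
print(len(cloud), "points")
```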

Point Cloud Processing for Seedling Detection

Once depth data is captured, it must be translated into a format that machine learning algorithms can analyze. Point cloud seedling detection transforms flat pixels into a measurable 3D representation of the seedbed.

Generating Agricultural Point Clouds from Stereo and Depth Sensors

A point cloud is a three-dimensional digital representation of the field surface, often consisting of millions of individual data points. Stereo cameras, LiDAR, or Time-of-Flight (ToF) depth sensors stream measurements continuously as the agricultural machinery moves, building a dense representation of the crop canopy and the ground beneath it.

Filtering, Denoising, and Ground Plane Removal in Seedling Scenes

Raw point clouds are noisy due to dust, sensor artifacts, and tractor vibrations. Filtering steps remove these outlier points (denoising). To isolate the seedlings, algorithms then perform "ground plane removal": by identifying the flat surface of the soil bed, the software deletes the ground points, leaving only the 3D clusters of the plants suspended in the digital space. A final filtering pass refines the result so that the isolated clusters contain plant points rather than leftover noise.
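A deliberately simplified sketch of these two steps follows. It assumes the points are already expressed with z as height, that the bed is roughly flat, and that most points are soil, so the median z approximates the ground. Real pipelines often fit a plane instead (for example with RANSAC) to cope with slopes. The margins, radii, and sample points are assumptions.

```python
import math
import statistics

def remove_ground(points, margin_m=0.01):
    """Keep points more than margin_m above the estimated soil height.

    Assumes z is height and that the majority of points lie on a flat bed,
    so the median z is a reasonable ground estimate.
    """
    ground_z = statistics.median(p[2] for p in points)
    return [p for p in points if p[2] - ground_z > margin_m]

def radius_outlier_filter(points, radius_m=0.02, min_neighbors=2):
    """Drop isolated points that have too few neighbors within radius_m."""
    kept = []
    for i, p in enumerate(points):
        neighbors = sum(
            1 for j, q in enumerate(points)
            if i != j and math.dist(p, q) <= radius_m
        )
        if neighbors >= min_neighbors:
            kept.append(p)
    return kept

# Toy scene: a 5x5 patch of soil, one small seedling, one airborne dust speck.
soil = [(x * 0.01, y * 0.01, 0.0) for x in range(5) for y in range(5)]
seedling = [(0.02, 0.02, 0.03), (0.02, 0.02, 0.04),
            (0.025, 0.02, 0.05), (0.02, 0.025, 0.045)]
dust = [(0.04, 0.0, 0.20)]

above_ground = remove_ground(soil + seedling + dust)
plants = radius_outlier_filter(above_ground)
print(len(above_ground), "points above ground,", len(plants), "after denoising")
```

Here the ground is removed first and the outlier filter runs second, so that dense soil points do not count as neighbors of stray noise.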

Clustering and Segmentation of Individual Seedlings in 3D Space

Once the ground is removed, the remaining data points must be grouped into individual plants. Algorithms like DBSCAN or Euclidean Cluster Extraction group neighboring points together based on physical proximity. Each distinct cluster is then segmented, measured, and counted as an individual seedling.
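The grouping step can be sketched as a simple Euclidean cluster extraction: grow a cluster from a seed point by repeatedly adding any point within a distance tolerance. This is a minimal, brute-force version written for readability; the tolerance, minimum cluster size, and sample points are assumptions, and production code would use a spatial index such as a k-d tree.

```python
import math
from collections import deque

def euclidean_clusters(points, tolerance_m=0.02, min_points=3):
    """Group points whose neighbors lie within tolerance_m of each other."""
    unvisited = set(range(len(points)))
    clusters = []
    while unvisited:
        seed = unvisited.pop()
        queue = deque([seed])
        members = [seed]
        while queue:
            current = queue.popleft()
            near = [i for i in unvisited
                    if math.dist(points[current], points[i]) <= tolerance_m]
            for i in near:
                unvisited.remove(i)
                queue.append(i)
                members.append(i)
        # Very small groups are treated as residual noise, not plants.
        if len(members) >= min_points:
            clusters.append([points[i] for i in members])
    return clusters

# Two toy seedlings 10 cm apart, plus one stray point.
plant_a = [(0.00, 0.00, 0.03), (0.01, 0.00, 0.04),
           (0.00, 0.01, 0.05), (0.01, 0.01, 0.04)]
plant_b = [(x + 0.10, y, z) for x, y, z in plant_a]
stray = [(0.30, 0.00, 0.02)]

clusters = euclidean_clusters(plant_a + plant_b + stray)
print("seedling count:", len(clusters))
```

A purely distance-based grouping will merge two seedlings whose leaves touch, which is one reason the learned detection models discussed in the next section are used alongside it.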

Object Detection Models for 3D Seedling Counting

The core intelligence behind modern counting systems lies in the deep learning architectures that interpret the spatial data. These models are trained to recognize the distinct geometric signatures of young plants.

2D Object Detection Models Adapted for 3D Context (YOLO, Faster R-CNN, Mask R-CNN)

Many systems utilize proven 2D deep learning models like YOLO (You Only Look Once) or Mask R-CNN, but feed them depth maps instead of standard photos. A depth map encodes the Z-axis as a grayscale image, allowing fast 2D object detectors to utilize spatial geometry without the heavy computational load of processing true 3D point clouds.
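Encoding depth as a grayscale image is essentially a linear rescaling. The sketch below maps metric depth to 8-bit values so a 2D detector can consume it; the near and far limits and the convention that nearer surfaces are brighter are assumptions, not a fixed standard.

```python
def depth_to_gray(depth_m, near_m, far_m):
    """Map depth in meters to 0-255, with nearer surfaces brighter.

    Pixels with no depth reading (None) are set to 0, which makes them
    indistinguishable from the far limit in this simple encoding.
    """
    span = far_m - near_m
    image = []
    for row in depth_m:
        out = []
        for z in row:
            if z is None:
                out.append(0)
                continue
            clipped = min(max(z, near_m), far_m)  # clamp to the working range
            out.append(round(255 * (far_m - clipped) / span))
        image.append(out)
    return image

# Toy 2x2 depth map in meters; None marks a pixel without a valid match.
depth = [
    [1.0, 0.875],
    [0.5, None],
]
gray = depth_to_gray(depth, near_m=0.5, far_m=1.0)
print(gray)
```

Choosing a tight near/far range around the expected plant height keeps most of the 256 gray levels available for the few centimeters that matter.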

3D Deep Learning Architectures for Point Clouds (PointNet, PointNet++, Sparse CNNs)

When geometric detail matters most, networks that operate directly on 3D data are an alternative. Architectures like PointNet and PointNet++ consume raw, unordered point clouds directly. These models analyze the local geometric structures of the points, learning the physical shape of a corn or soybean seedling, which makes them less sensitive to changes in field color or lighting. Sparse CNNs are also employed to efficiently process the inherently sparse point cloud data, reducing computational overhead while maintaining high accuracy in 3D object detection.
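The reason PointNet-style models can consume unordered points is that they apply the same small network to every point and then pool with a symmetric function such as max. The toy sketch below shows only that idea; the weights are arbitrary, untrained numbers chosen for illustration, and a real PointNet has many more layers and learned parameters.

```python
import random

def shared_mlp(point, weights):
    """Apply the same tiny linear layer + ReLU to one (x, y, z) point."""
    return [max(0.0, sum(w * c for w, c in zip(row, point))) for row in weights]

def global_feature(points, weights):
    """Max-pool each feature channel across all points (order-independent)."""
    per_point = [shared_mlp(p, weights) for p in points]
    return [max(channel) for channel in zip(*per_point)]

# Arbitrary, untrained weights: 4 output channels, 3 inputs each (assumption).
WEIGHTS = [
    [1.0, 0.0, 2.0],
    [0.0, -1.0, 1.0],
    [0.5, 0.5, 0.5],
    [-1.0, 1.0, 0.0],
]

cloud = [(0.00, 0.00, 0.03), (0.01, 0.00, 0.04), (0.00, 0.01, 0.05)]
shuffled = cloud[:]
random.Random(0).shuffle(shuffled)

same = global_feature(cloud, WEIGHTS) == global_feature(shuffled, WEIGHTS)
print("order-invariant:", same)
```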

Combining Object Detection with Instance Segmentation for Precise Seedling Counts

To reduce counting errors further, AI systems combine bounding-box object detection with instance segmentation. This hybrid approach first locates the general area of the seedling, then assigns a unique ID to every pixel or data point belonging to that specific plant. This prevents the system from double-counting a single plant whose leaves are widely spread.

From Raw Sensor Data to Accurate Counts: Machine Vision and Image Processing

Hardware and algorithms must work together through a structured pipeline to deliver reliable results. Effective machine vision smooths out the chaotic variables of a living agricultural environment.

Preprocessing RGB and Depth Data for Robust Seedling Detection

Before the AI sees the data, preprocessing steps are vital. This includes normalizing image brightness, correcting lens distortion, and aligning the RGB color image with the depth map. If a color pixel does not match its corresponding depth coordinate, the model receives inconsistent inputs and segments the plant less accurately.

Fusing RGB, Depth, and Spectral Information to Improve Classification

The most robust systems perform "sensor fusion." By combining high-resolution RGB data (for texture and color), depth data (for structure and height), and multispectral imagery (to measure chlorophyll levels), the AI gains a more complete picture of the plant. This makes it considerably more reliable at distinguishing a living seedling from a green weed or a dead leaf.
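As a rough illustration of fusion, the sketch below combines a vegetation index with a height reading in a hand-written rule. NDVI, computed as (NIR - Red) / (NIR + Red), is used as the chlorophyll proxy; that choice, the thresholds, and the reflectance values are all assumptions, and a production system would feed such channels to a trained model rather than fixed rules.

```python
def ndvi(nir, red):
    """Normalized Difference Vegetation Index from NIR and red reflectance."""
    total = nir + red
    return 0.0 if total == 0 else (nir - red) / total

def classify(nir, red, height_m, ndvi_min=0.4, height_min_m=0.02):
    """Combine a spectral cue (NDVI) with a structural cue (height)."""
    green = ndvi(nir, red) >= ndvi_min
    raised = height_m >= height_min_m
    if green and raised:
        return "seedling candidate"
    if green:
        return "flat vegetation"
    if raised:
        return "non-living raised object"
    return "soil"

# Hypothetical measurements: (NIR reflectance, red reflectance, height in m).
samples = {
    "upright green plant": (0.50, 0.08, 0.040),
    "ground-hugging green patch": (0.45, 0.10, 0.005),
    "dry residue": (0.30, 0.25, 0.030),
    "bare soil": (0.20, 0.18, 0.000),
}
labels = {name: classify(*values) for name, values in samples.items()}
for name, label in labels.items():
    print(f"{name}: {label}")
```

A weed of similar height and greenness would still be labeled a seedling candidate here, which is where shape and texture cues from the RGB channel come in.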

Handling Shadows, Soil Variability, and Residue in Machine Vision Pipelines

Machine vision pipelines use depth data to ignore shadows, which have color but no physical height. Soil variability is handled by training the deep learning models on highly diverse datasets representing different geographies and moisture levels. Residue is filtered out by programming the AI to ignore horizontal geometric structures that do not match the upright growth habit of a seedling.
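The residue rule can be approximated with a simple shape test on each 3D cluster: compare how tall it is with how far it spreads horizontally. This is a hand-tuned sketch, assuming z is height above the soil surface; the thresholds and sample clusters are invented, and young seedlings with wide cotyledons may need a more lenient ratio or a learned classifier.

```python
def is_upright(cluster, min_height_m=0.02, min_aspect=0.5):
    """Return True if a cluster is tall enough and not mostly horizontal.

    `cluster` is a list of (x, y, z) points with z as height above the soil.
    """
    xs, ys, zs = zip(*cluster)
    top = max(zs)
    footprint = max(max(xs) - min(xs), max(ys) - min(ys))
    if top < min_height_m:
        return False  # too low to be a plant; shadows produce no raised points at all
    # A single vertical column has zero footprint and counts as upright.
    return footprint == 0 or top / footprint >= min_aspect

# Toy clusters: a compact seedling and a stalk of residue lying on the soil.
seedling = [(0.00, 0.00, 0.02), (0.01, 0.00, 0.04), (0.02, 0.01, 0.05)]
residue = [(x * 0.03, 0.0, 0.015 + 0.002 * x) for x in range(6)]

print("seedling upright:", is_upright(seedling))
print("residue upright:", is_upright(residue))
```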

Sairone as the Machine Vision and AI Layer for Seedling Counting

Building an in-house computer vision pipeline is resource-intensive and often impractical for farm managers and agritech integrators. Sairone, a product of Saiwa, provides the essential AI layer needed to process complex agricultural imagery without requiring custom software development.

End-to-End Detection, Segmentation, and Counting Pipelines for Seedlings

Sairone supports AI-based workflows for seedling detection, counting, and stand assessment using agricultural imagery. Its machine vision capabilities help agribusinesses process field data more efficiently and turn raw visual inputs into actionable plant population insights. Rather than building and maintaining a custom analytics pipeline internally, teams can use Sairone to streamline early-stage crop monitoring and focus on stand quality, emergence patterns, and decision-ready outputs.

Machine Learning on Images and Point Clouds to Improve Seedling Count Accuracy

Saiwa's team continuously trains the machine learning models on diverse, multi-regional datasets. Whether analyzing RGB video feeds from a tractor boom or orthophotos from a drone, the models are trained to adapt to the specific morphological traits of different crop types. This continuous learning loop is intended to keep improving seedling count accuracy as new field data arrives.

Cloud-Based Software Applications for Large-Scale Field Data Processing

Processing gigabytes of 3D point clouds requires substantial computing power. Sairone provides cloud-based SaaS services that handle the heavy computation off-site. Farm managers upload their drone or tractor sensor data, and the Sairone platform processes the imagery, returning emergence maps and population counts to a user-friendly dashboard.

Integrating Sairone Outputs with Planters, Sprayers, and Farm Management Systems

Data is only valuable if it drives action. Sairone’s platforms are designed for seamless B2B integration. The resulting seedling count data and spatial maps can be exported directly into existing Farm Management Information Systems (FMIS). This interoperability allows technical service providers to instantly generate variable-rate prescriptions for targeted replanting or precise agrochemical applications.
