Today, the number of available images keeps growing, yet finding the exact image of interest remains difficult for the typical user. Over the last two decades, a significant amount of image retrieval research has been done. Traditionally, research in this field has concentrated on content-based image retrieval. Recent research, however, indicates that there is a semantic gap between content-based image retrieval and human-understandable image semantics.

As a result, the focus of research in this field has shifted to bridging the semantic gap between low-level image data and high-level semantics. Image annotation, which assigns semantic labels to images, often with the help of machine learning techniques, is a common way to bridge this gap. In this article, we focus on topics and principles related to image annotation techniques.

Types of Image Annotation

There are 4 main types of image annotation:

Classification

Classification is a kind of image annotation in which each image is assigned a label according to what it depicts, so that images showing the same kind of item are grouped together across a data set. This kind of annotation is used to teach a computer to recognize, in an unlabeled image, an object that matches objects in the labeled images it was trained on.

As an illustration, an annotator can categorize interior photographs using tags such as "kitchen" or "living room." They can also label outdoor photos as "day" or "night."

Object detection

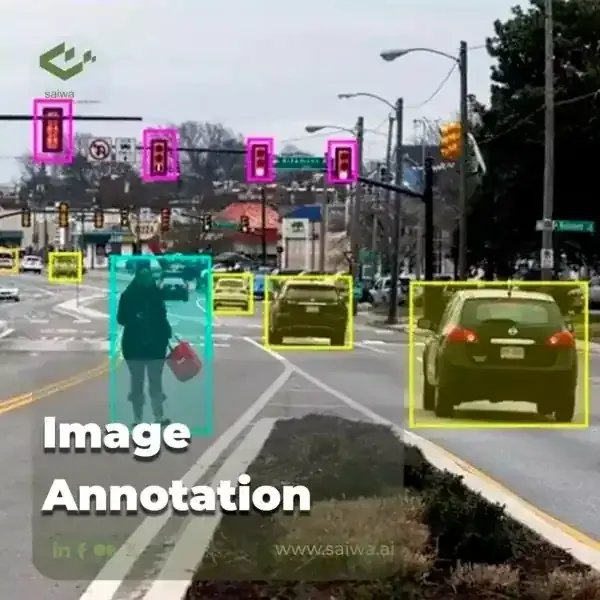

The goal of object detection annotation is to detect the presence, location, and number of one or more items in an image and to label each of them accurately. Techniques like polygon annotation and bounding box annotation can be used to annotate objects in an image. For instance, we might have multiple pictures of street scenes in which we wish to recognize automobiles, trucks, bicycles, or pedestrians. With object detection, each of them can be annotated individually in the same image.
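As a minimal sketch of what such annotations might look like as data, the snippet below stores one labelled box per object and counts the instances of each class in a single image. The `BoxAnnotation` class, its field names, and the coordinates are hypothetical and chosen only for illustration.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class BoxAnnotation:
    """One labelled object: a class name plus the pixel corners of its box."""
    label: str
    x_min: int
    y_min: int
    x_max: int
    y_max: int


def count_objects(annotations):
    """Return how many instances of each class were annotated in one image."""
    return Counter(annotation.label for annotation in annotations)


# Hypothetical annotations for a single street-scene image.
street_scene = [
    BoxAnnotation("car", 34, 120, 210, 260),
    BoxAnnotation("car", 300, 130, 470, 255),
    BoxAnnotation("pedestrian", 500, 100, 540, 250),
    BoxAnnotation("bicycle", 560, 150, 640, 240),
]

counts = count_objects(street_scene)
print(counts)
```

Because every object keeps its own record, presence, location, and number can all be read from the same list.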

Semantic segmentation

Semantic segmentation is a more sophisticated form of image annotation. It can be applied in a variety of ways to examine the visual elements of photos and identify similarities and differences between items. We apply this technique when we want to understand the presence, location, and occasionally the size and shape of things in photographs.

For instance, if we have numerous photographs of a stadium with a crowd, we can annotate the crowd separately from the stadium seats so that the two are segmented into distinct regions.

Deep learning image segmentation makes it possible to track the presence, position, number, size, and shape of objects in an image. Returning to the stadium example, per-pixel segmentation makes it possible both to annotate the individuals in the stadium and to estimate the number of people in the crowd.
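To make the per-pixel idea concrete, here is a small sketch that treats a segmentation mask as a grid of class ids, measures the area of each class, and counts connected regions of one class. The class ids and the tiny mask are invented for illustration. Counting connected regions only approximates a head count, because people who touch each other merge into one region.

```python
from collections import Counter, deque

BACKGROUND, SEAT, PERSON = 0, 1, 2  # hypothetical class ids


def pixels_per_class(mask):
    """Return the number of pixels assigned to each class id."""
    return Counter(value for row in mask for value in row)


def count_regions(mask, class_id):
    """Count 4-connected regions of class_id using breadth-first search."""
    height, width = len(mask), len(mask[0])
    seen = [[False] * width for _ in range(height)]
    regions = 0
    for row in range(height):
        for col in range(width):
            if mask[row][col] != class_id or seen[row][col]:
                continue
            regions += 1
            seen[row][col] = True
            queue = deque([(row, col)])
            while queue:
                y, x = queue.popleft()
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    inside = 0 <= ny < height and 0 <= nx < width
                    if inside and not seen[ny][nx] and mask[ny][nx] == class_id:
                        seen[ny][nx] = True
                        queue.append((ny, nx))
    return regions


# Toy 4x6 mask: two separate groups of person pixels among seats.
stadium_mask = [
    [0, 2, 2, 1, 1, 0],
    [0, 2, 2, 1, 1, 0],
    [0, 0, 0, 1, 2, 0],
    [1, 1, 0, 0, 2, 0],
]

print(pixels_per_class(stadium_mask))
print(count_regions(stadium_mask, PERSON))
```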

Boundary recognition

Image annotation can also be used to teach computers to recognize the edges or lines of objects in an image. These boundaries can be the edges of an individual object or of a region of terrain visible in the photograph. The training of autonomous vehicles takes advantage of this kind of annotation, for instance to identify the limits of sidewalks and traffic lanes. It can also be used to train machine learning models for drones in situations where it is crucial to follow a specific course or recognize potential obstructions such as power lines.
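One way to relate boundaries to region annotations is to derive the edge pixels from a binary mask. The sketch below assumes a hypothetical lane mask in which 1 marks the lane and 0 marks everything else. A pixel counts as boundary if it belongs to the lane and has a direct neighbour that is outside the lane or outside the image.

```python
def boundary_pixels(mask):
    """Return (row, col) positions of foreground pixels on the region's edge."""
    height, width = len(mask), len(mask[0])
    boundary = []
    for row in range(height):
        for col in range(width):
            if not mask[row][col]:
                continue
            neighbours = ((row - 1, col), (row + 1, col), (row, col - 1), (row, col + 1))
            # The bounds check comes first so out-of-image neighbours are never indexed.
            if any(
                not (0 <= y < height and 0 <= x < width) or not mask[y][x]
                for y, x in neighbours
            ):
                boundary.append((row, col))
    return boundary


# Hypothetical 5x5 mask with a 3x3 lane region in the middle.
lane_mask = [
    [0, 0, 0, 0, 0],
    [0, 1, 1, 1, 0],
    [0, 1, 1, 1, 0],
    [0, 1, 1, 1, 0],
    [0, 0, 0, 0, 0],
]

print(boundary_pixels(lane_mask))
```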

What is Image Annotation?

Image annotation is the process of adding a layer of metadata to an image. It's a technique that allows people to describe what they see in an image, and that information can be used for a variety of purposes. For example, it can help identify things in an image or offer further context about them. It can also give helpful information about how those things are spatially or temporally connected.

Image annotation is important in computer vision, a technology that allows computers to obtain high-level comprehension from digital images or videos and see and interpret visual information in the same way that people do.

Why are image annotation services necessary?

Image annotation services are a main requirement for effective computer vision applications and for delivering commercial value. Labeling supplies the knowledge that an AI model absorbs during training. Despite advances in other areas of computer vision (such as unsupervised learning), supervised learning based on image annotation is still the most effective way to solve extremely complicated business challenges.

What do you need for image annotation?

Start your image annotation work by gathering four essential components: a wide collection of photographs that accurately depict reality, skilled annotation personnel, an appropriate annotation platform, and a project management methodology.

Diverse images

For the model to behave correctly, it is essential that the images used to train it are diverse. If you're aiming to detect cars and motorbikes in road photos, your model needs to have been trained on a sizable number of examples of each category. This is the first kind of diversity to achieve. Variety within each class is also crucial, so that the training data covers the scenarios the model may face in the future. For instance, when detecting road vehicles from CCTV, it is important to have category diversity (cars, but also trucks, motorbikes...) and diversity of exterior conditions (day, sun, rain...).
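A quick way to check this kind of diversity is to count how often each category and each condition appears in the collection. The following sketch assumes each image has a small metadata record. The field names, the records, and the 15% threshold are illustrative assumptions, not recommended values.

```python
from collections import Counter


def underrepresented(records, key, min_share):
    """Return the values of `key` whose share of the dataset is below min_share."""
    counts = Counter(record[key] for record in records)
    total = sum(counts.values())
    return sorted(value for value, n in counts.items() if n / total < min_share)


# Hypothetical metadata for ten CCTV images.
records = (
    [{"category": "car", "condition": "day"}] * 6
    + [{"category": "truck", "condition": "day"}] * 2
    + [{"category": "motorbike", "condition": "rain"}]
    + [{"category": "car", "condition": "night"}]
)

print(underrepresented(records, "category", min_share=0.15))
print(underrepresented(records, "condition", min_share=0.15))
```

Values that show up in the result are candidates for collecting more images before training.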

Proper annotation platform

An appropriate tool is essential for completing a labeling project quickly and effectively. There are numerous options, ranging from internal development to open source to enterprise platforms. When selecting one, it's crucial to consider the following factors:

Productivity

Quality

Project management

Security

Trained annotators

Depending on the requirements of the project and the difficulty of the underlying task, trained annotators perform the work either internally or externally. At the start of a project, it is crucial to train the workforce; this requires thorough annotation guidelines that include examples and describe edge cases. To ensure that the labeling team understands the work correctly, a test project with a review stage can be used.
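The review stage of such a test project can be as simple as comparing a trainee's labels with labels a reviewer has already approved. The sketch below is one possible way to do that. The function name, image ids, and labels are hypothetical.

```python
def review_test_batch(reference, submitted):
    """Compare submitted labels with reviewer-approved reference labels.

    Returns the agreement rate and a dict mapping each disputed image id
    to a (expected, given) pair. Missing submissions count as disagreements.
    """
    if not reference:
        raise ValueError("reference labels must not be empty")
    disagreements = {
        image_id: (expected, submitted.get(image_id))
        for image_id, expected in reference.items()
        if submitted.get(image_id) != expected
    }
    agreement = 1 - len(disagreements) / len(reference)
    return agreement, disagreements


reference = {"img_1": "kitchen", "img_2": "living room", "img_3": "kitchen", "img_4": "bedroom"}
trainee = {"img_1": "kitchen", "img_2": "kitchen", "img_3": "kitchen", "img_4": "bedroom"}

agreement, disagreements = review_test_batch(reference, trainee)
print(agreement, disagreements)
```

The disputed images are a natural source of new examples and edge cases for the annotation guidelines.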

Project management process

A specialized approach must be used to manage the hard work of labeling projects. The team, the requirements, the work distribution, the use of manual or automated labeling, and quality control all need to be taken into account during this procedure.

Image annotation shapes

Different tasks require data to be annotated in different shapes so that the resulting data can be used directly for training. Below is a list of the annotation shapes used for these tasks.

Bounding Box Annotation

Drawing a bounding box around an item in an image, such as an orange or a car, is one technique for annotating and labeling things. With bounding box annotation, you draw a rectangle around any item and then label it.
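A common way to compare two bounding boxes, for example one drawn by an annotator and one drawn by a reviewer, is intersection over union (IoU). The sketch below assumes boxes are given as `(x_min, y_min, x_max, y_max)` tuples in pixels, which is only one of several conventions in use.

```python
def iou(box_a, box_b):
    """Intersection over union of two (x_min, y_min, x_max, y_max) boxes."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b
    # Width and height of the overlap, clamped to zero when the boxes are disjoint.
    inter_w = max(0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0, min(ay2, by2) - max(ay1, by1))
    intersection = inter_w * inter_h
    area_a = (ax2 - ax1) * (ay2 - ay1)
    area_b = (bx2 - bx1) * (by2 - by1)
    union = area_a + area_b - intersection
    return intersection / union if union else 0.0


annotator_box = (0, 0, 10, 10)
reviewer_box = (5, 5, 15, 15)
print(iou(annotator_box, reviewer_box))
```

An IoU of 1.0 means the boxes coincide and 0.0 means they do not overlap at all; the threshold for accepting a box is a project-level decision.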

Polygon Annotation

Here the boundaries of an object in a frame are traced precisely, so the object is captured with its actual size and shape. Polygon annotation is commonly used for objects such as street signs, brand logos, and faces.
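To illustrate why a polygon captures size and shape more faithfully than a box, the sketch below computes the area of a polygon with the shoelace formula and compares it with the area of the enclosing rectangle. The octagonal sign and its coordinates are made up for the example.

```python
def polygon_area(points):
    """Area of a simple polygon given as ordered (x, y) vertices (shoelace formula)."""
    total = 0
    for (x1, y1), (x2, y2) in zip(points, points[1:] + points[:1]):
        total += x1 * y2 - x2 * y1
    return abs(total) / 2


def enclosing_box(points):
    """Smallest axis-aligned box (x_min, y_min, x_max, y_max) containing the polygon."""
    xs = [x for x, _ in points]
    ys = [y for _, y in points]
    return min(xs), min(ys), max(xs), max(ys)


# Hypothetical octagonal street sign traced by an annotator.
sign = [(2, 0), (4, 0), (6, 2), (6, 4), (4, 6), (2, 6), (0, 4), (0, 2)]

x_min, y_min, x_max, y_max = enclosing_box(sign)
box_area = (x_max - x_min) * (y_max - y_min)
print(polygon_area(sign), box_area)
```

The gap between the two areas is background that a bounding box would include but the polygon leaves out.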

Cuboid Annotation

This 3D annotation technique draws a three-dimensional box (a cuboid) around an object to capture its depth as well as its height and width. It is frequently employed in architecture and medical imaging to capture the depth or distance of objects, including structures like buildings or cars, and to define space and volume.

Semantic Segmentation

Also known as image segmentation, this technique groups the areas of an image that belong to the same object class. Each pixel in the image is classified, producing a pixel-level prediction.

What is Automatic Online Image Annotation?

The technique of automatically annotating images without human intervention is called automated image annotation. This approach uses intelligent, AI-driven software to quickly identify patterns in existing datasets and annotate vast amounts of previously unseen data.

Automated online image annotation typically involves two primary steps: feature extraction and classification. During feature extraction, the AI-powered software learns patterns and representations, including the shapes, sizes, colors, and content of images, as well as the association between these images and their corresponding output, which in this case is the annotation.

Once the patterns or features have been extracted, the software can perform classification, which labels the image based on those features.
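The two steps can be sketched with a deliberately simple toy: the feature is the average color of an image, and classification assigns the label whose training average is closest. Real systems typically learn far richer features, so treat this only as an illustration of the extract-then-classify flow. All function names and pixel values here are assumptions.

```python
import math


def mean_color(pixels):
    """Feature extraction: average (R, G, B) over all pixels of one image."""
    count = len(pixels)
    return tuple(sum(pixel[channel] for pixel in pixels) / count for channel in range(3))


def train_centroids(labelled_images):
    """Average the features of each label's training images into one centroid."""
    grouped = {}
    for pixels, label in labelled_images:
        grouped.setdefault(label, []).append(mean_color(pixels))
    return {
        label: tuple(sum(values) / len(features) for values in zip(*features))
        for label, features in grouped.items()
    }


def annotate(pixels, centroids):
    """Classification: return the label whose centroid is nearest to the image feature."""
    feature = mean_color(pixels)
    return min(centroids, key=lambda label: math.dist(feature, centroids[label]))


# Tiny hypothetical training set: each image is a short list of RGB pixels.
training_set = [
    ([(210, 220, 240), (190, 200, 230)], "day"),
    ([(180, 190, 210), (200, 205, 225)], "day"),
    ([(20, 25, 40), (10, 15, 30)], "night"),
    ([(30, 30, 50), (15, 20, 35)], "night"),
]

centroids = train_centroids(training_set)
print(annotate([(25, 30, 45), (12, 18, 28)], centroids))
```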

Automatic online image annotation applications

Many tasks related to image retrieval and image indexing can benefit from automatic online image annotation. Other applications include photo apps, social media, medical imaging, and e-commerce. Let's talk about each of these applications briefly.

Image retrieval

This method involves learning the visual characteristics of the image, such as color, texture and shape, and then retrieving images by assigning an appropriate tag to each image based on what it contains. A user uploads a query image, which is then compared to the database using similarity metrics to perform an image search. The user is presented with search results that include the most comparable photographs in the database.
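The query-by-example search described above can be sketched as ranking stored feature vectors by their similarity to the query's vector. Cosine similarity is used here as one possible similarity metric. The three-number feature vectors and file names are invented; in practice the features would come from an image model.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two feature vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def search(query, database, top_k=2):
    """Return the names of the top_k database images most similar to the query."""
    ranked = sorted(
        database.items(),
        key=lambda item: cosine_similarity(query, item[1]),
        reverse=True,
    )
    return [name for name, _ in ranked[:top_k]]


# Hypothetical feature vectors for three indexed images.
database = {
    "beach.jpg": (0.9, 0.8, 0.1),
    "forest.jpg": (0.1, 0.9, 0.2),
    "sunset.jpg": (0.9, 0.4, 0.1),
}

query_features = (1.0, 0.8, 0.1)
print(search(query_features, database))
```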

Photo Apps

One of the most fascinating and common uses of automated online image annotation is in photo applications. These applications begin to automatically categorize images by person, location, environment, time, effects, filters, etc. as soon as a user takes a photo and uploads it. To recognize a person, the person must first be tagged manually, after which the software identifies and tags that person automatically.

Social media

Social networking sites use a framework similar to that of photo apps. Large datasets are also used to train social media algorithms so that users can search for and recognize photos. Facebook, for example, automatically tags images based on their content. Instagram and Twitter use hashtags to search and retrieve photos.

Medical imaging

Automated online image annotation can be used to identify specific aspects of X-ray or MRI images and help medical professionals diagnose patients.

Electronic commerce

In e-commerce, automated online image annotation can be used to help shoppers find items based on visual characteristics. For example, an online clothing retailer might use automated online image annotation to help customers find clothing products with specific patterns or colors.

Image annotation applications

To assemble a full list of available applications that use image annotation, you'd have to read through hundreds of pages. For now, we'll highlight some of the most remarkable application cases from major industries.

Autonomous Vehicles

Machine learning algorithms for self-driving cars must be able to recognize road signs, traffic signals, bike lanes, and other possible road conditions, such as inclement weather. Image annotation is widespread in a variety of applications, including advanced driving assistance systems, navigation and steering response, identification of road objects and their size, and observation of movement, such as that of pedestrians.

Agriculture

Farmers are also getting in on the action. Image annotation aids in the creation of content-driven data labeling, which helps to decrease human harm and safeguard crops. Using drones and satellite photos, AI can be used for a variety of purposes, including crop yield estimation, soil evaluation, and more.

Healthcare

AI-powered solutions are supplementing doctors' diagnoses. For example, AI can analyze radiological images to determine the probability of the presence of specific tumors. In one case, researchers used hundreds of images tagged with cancerous and non-cancerous spots to train a model until it could distinguish between them on its own.

Monitoring and security

Security cameras can be seen everywhere these days, and organizations are investing heavily in monitoring systems to prevent robbery, vandalism, and accidents. Image annotation is utilized in crowd detection, nighttime and thermal vision, traffic movements and tracking, human tracking, and face recognition. Machine learning specialists can use annotated images to develop algorithms for surveillance and security devices to create a much safer environment.

Main Image Annotation Problems in Machine Learning

While image annotation offers several advantages, practitioners face several main problems when applying it in machine learning systems.

Choosing the Best Annotation Tools

ML systems must be trained to distinguish elements inside digital visual images in the same manner that people do. Organizations need to know the aspects of the data types they want to utilize for data labeling, and they will require the correct combination of digital annotation tools and a staff that understands how to use them.

Assuring the Quality of Data Results

Machine learning models rely heavily on high-quality data, and they can only make accurate predictions if the quality of the labeled data is reliable. Subjective data, for example, can be hard for labelers to interpret consistently, and interpretations may vary depending on where the labelers are located.

Switching between automatic system and human annotation

Using human annotators rather than automated tools can take longer and cost more, including the cost of finding people with the relevant skill sets. Automated annotation tools can improve accuracy and consistency.
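One common compromise is to accept automatic labels only when the model is confident and send the rest to human annotators. The sketch below shows that routing step under the assumption that each prediction comes with a confidence score between 0 and 1. The 0.9 threshold and the sample predictions are illustrative.

```python
def route_predictions(predictions, threshold=0.9):
    """Split (image_id, label, confidence) triples into accepted and needs-review lists."""
    accepted, needs_review = [], []
    for image_id, label, confidence in predictions:
        if confidence >= threshold:
            accepted.append((image_id, label))
        else:
            needs_review.append((image_id, label))
    return accepted, needs_review


# Hypothetical model output for four images.
predictions = [
    ("img_1", "car", 0.98),
    ("img_2", "truck", 0.62),
    ("img_3", "pedestrian", 0.91),
    ("img_4", "bicycle", 0.40),
]

accepted, needs_review = route_predictions(predictions)
print(len(accepted), "accepted;", len(needs_review), "sent to human review")
```

Raising the threshold shifts more work to people and lowers the risk of wrong automatic labels; lowering it does the opposite.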

Image Annotation at Saiwa

In machine vision applications, there are three types of annotations: classification, object detection, and image segmentation. Saiwa provides three annotation services that support these three annotation types: classification annotation, bounding box annotation, and boundary annotation. Each type of annotation is described here, along with the properties of the corresponding Saiwa services.

Classification

This approach is a whole-image annotation that simply identifies attributes in the input images. Each image has a single label (in classification applications, this label is usually called a tag). Whenever a machine learning model is trained to detect similarities between unlabeled images and known annotated images, this sort of annotation is utilized. Texture classification, medical diagnosis, defect identification, scene recognition, and other applications are all possible using image classification. Classification annotation is the simplest and quickest sort of annotation.

Object detection

Object detection annotation is concerned with finding and locating things of interest in an image. In this case, the annotation tool should provide a way to draw bounding boxes around all instances of objects in addition to assigning labels. The most prevalent type of annotation is the bounding box annotation. The location of the label is an extra parameter in this case, whereas in image classification the whole image is labeled as one class.

Image segmentation

Image segmentation, in which objects are delineated at the pixel level, is the most complex type of annotation. The boundaries of objects inside an image are marked so that a trained model can later find them in unannotated images. The objects' edges may be irregular. Compared with other annotation types, this type of annotation is slower but more accurate.

The features of the Saiwa image annotation service:

Provide the three most typical types of annotations.

For complex boundaries, an interactive interface that requires only a few clicks is provided.

Export in standard annotation formats (e.g., txt and JSON).

Labels that can be diverse, overlapping, and fine-grained.

Results can be exported and archived in the user's cloud or locally.

The Saiwa team can customise services by using the "Request for Customization" option.

View and save the annotated images.
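To give a feel for what exporting annotations as JSON or txt can involve, here is a generic sketch. The record fields and the one-line-per-box text layout are hypothetical and are not a description of Saiwa's actual export format.

```python
import json

# Hypothetical bounding box annotations; field names are illustrative only.
annotations = [
    {"image": "street_001.jpg", "label": "car", "box": [34, 120, 210, 260]},
    {"image": "street_001.jpg", "label": "pedestrian", "box": [500, 100, 540, 250]},
]


def to_json(records):
    """Serialize the annotation records as an indented JSON string."""
    return json.dumps(records, indent=2)


def to_txt(records):
    """Write one line per box: image name, label, then the four coordinates."""
    lines = []
    for record in records:
        coords = " ".join(str(value) for value in record["box"])
        lines.append(f"{record['image']} {record['label']} {coords}")
    return "\n".join(lines)


print(to_json(annotations))
print(to_txt(annotations))
```

JSON keeps the structure explicit and is easy to extend with extra fields, while a flat text layout is compact and simple to parse line by line.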

FAQ

Most frequent questions and answers

What is image annotation?

Image annotation is the process of adding metadata or labels to an image that provides additional information about its contents. This metadata can include various types of information, such as object bounding boxes, segmentation masks, keypoints, and semantic labels.

Why is image annotation important?

Image annotation is essential for various computer vision tasks, such as object detection, segmentation, and recognition. These tasks rely on annotated data to train machine learning algorithms, which can then be used to automatically analyze and understand images.

What are some types of image annotations?

There are several types of image annotations, including bounding boxes, semantic segmentation, instance segmentation, keypoints, and text annotations. Bounding boxes are rectangles that enclose an object of interest, while semantic segmentation involves labeling each pixel in an image with a semantic category. Instance segmentation is similar to semantic segmentation but distinguishes between individual instances of objects. Keypoints are specific points on an object, and text annotations provide additional information about an image.

How is image annotation performed?

Image annotation can be performed manually by human annotators or automatically by computer algorithms. Manual annotation involves a person drawing bounding boxes, polygons, or other annotations on an image using specialized software. Automatic annotation can use computer vision algorithms to detect and label objects in an image.

What are some challenges of image annotation?

One of the main challenges of image annotation is ensuring the accuracy and consistency of annotations. This requires training and monitoring annotators, as well as developing quality control measures to catch errors. Another challenge is dealing with the large amount of data involved in image annotation, as annotating large datasets can be time-consuming and expensive. Additionally, some types of annotations, such as semantic segmentation, can be difficult and subjective to perform.

How can image annotation benefit businesses?

Image annotation can benefit businesses in various ways, such as improving the accuracy of computer vision algorithms, reducing the time and cost of manual analysis, and enabling new applications and services. For example, image annotation can be used in e-commerce to automatically detect and label products in images, improving search results and recommendations. It can also be used in healthcare to automatically analyze medical images, improving diagnosis and treatment.

Note: Some visuals on this blog post were generated using AI tools.