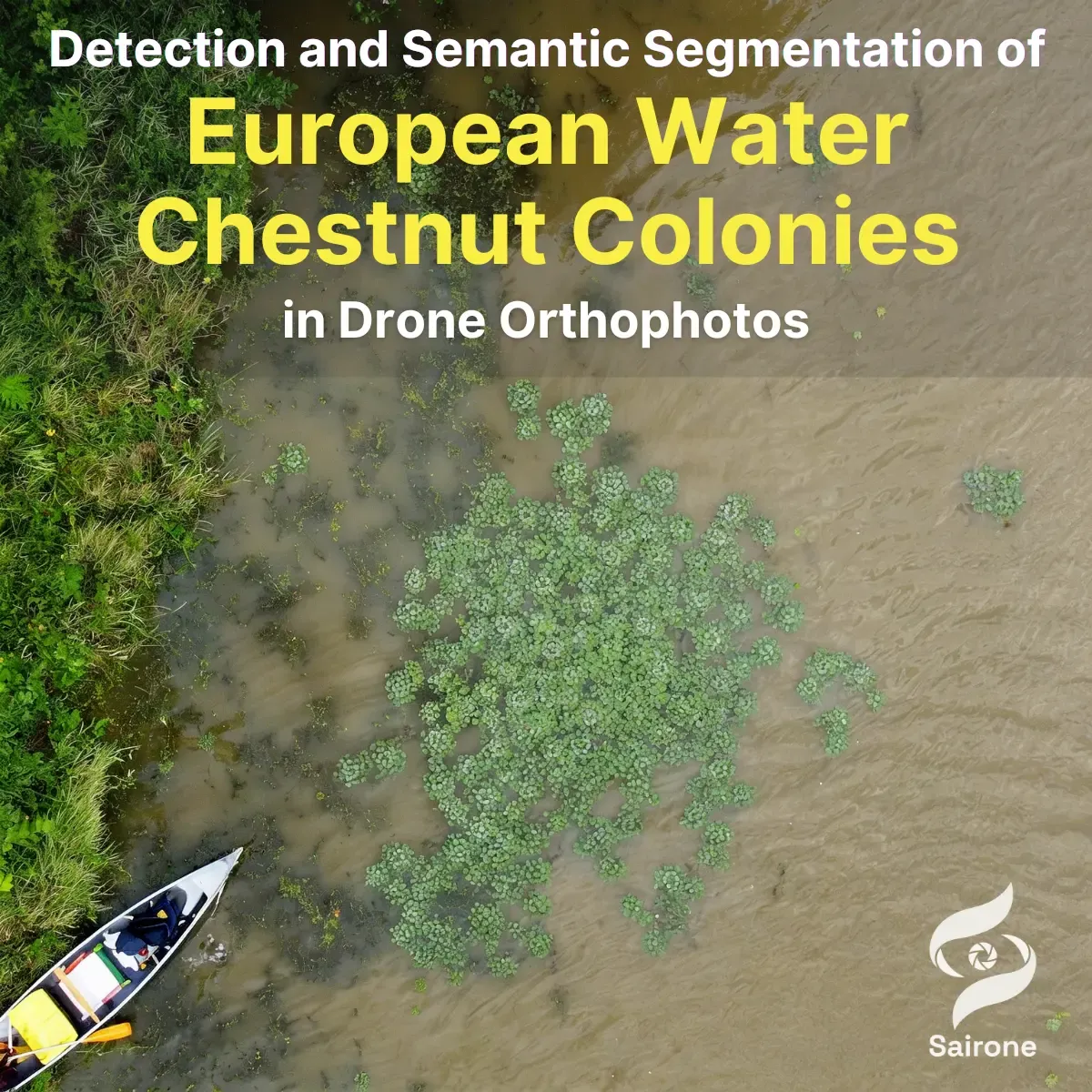

Detection and Semantic Segmentation of European Water Chestnut Colonies in Drone Orthophotos

Status: In development (started January 1, 2026)

In 2026, Saiwa continues its collaboration with Ducks Unlimited Canada (DUC) to advance drone-based monitoring of invasive aquatic vegetation. Building on previous phases focused on segmentation accuracy and geospatial localization, this phase introduces enhanced pixel-level semantic segmentation of European Water Chestnut (EWC) to enable detailed mapping of both individual plants and dense colonies across diverse aquatic environments.

About Ducks Unlimited Canada (DUC)

Ducks Unlimited Canada is a leading conservation organization dedicated to protecting and restoring wetlands and associated ecosystems across Canada. Through science-driven habitat management and strategic partnerships, DUC advances innovative monitoring solutions for sustainable environmental stewardship.

Overview

In earlier phases of the project, Saiwa developed a robust deep learning pipeline capable of segmenting European Water Chestnut with high accuracy under varying environmental conditions. Incremental learning techniques improved generalization to new plant appearances, while subsequent system enhancements enabled conversion of segmentation outputs into geographic coordinates and GIS-compatible formats.

However, these earlier approaches are limited in capturing fine structural detail, particularly in dense growth regions where multiple plants merge into contiguous clusters. In such regions, boundary ambiguity and limited contextual awareness can reduce the precision of colony delineation.

The 2026 phase addresses these limitations by advancing toward a more context-aware semantic segmentation framework, enabling more precise and coherent delineation of both individual plants and colonies at the pixel level.

Problem Statement

While previous segmentation models performed well at identifying EWC regions, they are limited in how accurately they can represent the complex spatial structures needed for detailed ecological analysis and intervention planning.

Key challenges include:

- Boundary ambiguity in dense colonies: Overlapping leaves and tightly packed vegetation create indistinct borders between individual plants

- Loss of structural clarity: Coarse or locally constrained representations may fail to capture colony shape, density, and spatial continuity

A more advanced segmentation approach is required to accurately model the spatial distribution and morphology of EWC infestations.

Project Goals

The primary objectives of this phase are:

- Develop an improved semantic segmentation framework to identify EWC at the pixel level

- Accurately delineate both individual plants and dense colonies

- Preserve fine spatial details to support ecological analysis and monitoring

- Utilize previously collected annotations alongside newly labeled colony data

- Generate GIS-ready outputs compatible with existing monitoring workflows

Solution Approach

1. Tile-Based Semantic Segmentation

Large orthomosaic TIFF images are divided into manageable tiles for processing. A deep learning–based semantic segmentation model is trained to classify each pixel as either EWC or background, and the per-tile predictions are merged back into a continuous map covering the entire scene.
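The tiling step can be sketched roughly as follows. This is a minimal illustration, not Saiwa's implementation: the 512 px tile size, the 64 px overlap, the averaging of overlapping predictions, and the helper names `tile_windows` and `stitch` are all assumptions made for the example. Overlapping tiles give the model context across tile borders, and averaging the overlaps when reassembling the scene helps avoid visible seams in the final mask.

```python
import numpy as np

# Hypothetical helpers; tile size, overlap and averaging are assumed choices.

def tile_windows(height, width, tile=512, overlap=64):
    """Yield (row, col, h, w) windows covering a height x width raster.

    Neighbouring tiles share `overlap` pixels; the last tile in each
    direction is shifted back so that it ends exactly at the image edge.
    """
    stride = tile - overlap  # must be positive, i.e. overlap < tile

    def starts(size):
        if size <= tile:
            return [0]
        positions = list(range(0, size - tile, stride))
        positions.append(size - tile)  # final tile flush with the edge
        return positions

    for row in starts(height):
        for col in starts(width):
            yield row, col, min(tile, height), min(tile, width)


def stitch(prob_tiles, height, width):
    """Average overlapping per-tile EWC probabilities into one full-scene map."""
    total = np.zeros((height, width), dtype=np.float64)
    count = np.zeros((height, width), dtype=np.float64)
    for (row, col, h, w), prob in prob_tiles:
        total[row:row + h, col:col + w] += prob
        count[row:row + h, col:col + w] += 1
    return total / np.maximum(count, 1)


# Usage: pixel values stand in for model output on each tile.
scene = np.random.default_rng(0).random((1000, 1300))
tiles = [((r, c, h, w), scene[r:r + h, c:c + w])
         for r, c, h, w in tile_windows(*scene.shape)]
ewc_mask = stitch(tiles, *scene.shape) > 0.5  # per-pixel EWC vs background
```

In practice each tile would be read from the orthomosaic and passed through the segmentation model; here the pixel values themselves stand in for model probabilities, so the stitched map reproduces the input scene.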

2. Training Data Strategy

The training dataset combines:

- High-quality annotations from previous segmentation phases

- Newly generated segmentation masks capturing colony-level structures

This hybrid labeling approach is intended to keep the training data consistent with earlier datasets while better representing complex vegetation patterns.
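One possible way to combine the two label sources is sketched below, assuming each annotation is stored as a record with a hypothetical `ortho_id` field naming the orthomosaic it came from. Splitting by orthomosaic rather than by tile is an assumption added for illustration: overlapping tiles from the same scene are near-duplicates, so keeping each scene entirely in either the training or the validation split gives a more honest estimate of generalization.

```python
import random
from collections import defaultdict


def build_dataset(previous, colony, val_fraction=0.2, seed=0):
    """Merge earlier and new colony annotations, split by orthomosaic.

    Each record is a dict with a hypothetical 'ortho_id' key naming the
    orthomosaic it was cut from. Records are copied and tagged with their
    label source; every tile of one orthomosaic lands in the same split.
    """
    records = ([dict(r, source="previous") for r in previous]
               + [dict(r, source="colony") for r in colony])
    by_ortho = defaultdict(list)
    for rec in records:
        by_ortho[rec["ortho_id"]].append(rec)

    # Shuffle whole orthomosaics (not tiles) before choosing validation scenes.
    ortho_ids = sorted(by_ortho)
    random.Random(seed).shuffle(ortho_ids)
    n_val = max(1, round(len(ortho_ids) * val_fraction))
    val_ids = set(ortho_ids[:n_val])

    train = [r for o in ortho_ids if o not in val_ids for r in by_ortho[o]]
    val = [r for o in ortho_ids if o in val_ids for r in by_ortho[o]]
    return train, val
```

The `source` tag makes it easy to report validation metrics separately for the earlier annotations and the new colony-level masks.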

3. Context-Aware Boundary Refinement

Special attention is given to regions with dense vegetation where plant boundaries are ambiguous. The model is optimized to better capture:

- Smooth transitions between adjacent plants

- Fine-grained edges within colonies

- Structural continuity across dense patches

Compared to earlier convolutional segmentation approaches, the adopted architecture provides improved modeling of long-range spatial dependencies and global context. This results in more coherent segmentation maps, reduced fragmentation, and more accurate boundary delineation in complex scenes.
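The source does not name the adopted architecture or its training loss. As a loss-side complement to the architectural change described above, one common way to emphasize ambiguous edges is to up-weight pixels on the EWC/background boundary in a per-pixel cross-entropy. The sketch below assumes binary masks stored as NumPy arrays; `boundary_map`, `boundary_weighted_bce`, and the boundary weight of 5.0 are hypothetical choices, not part of the described system.

```python
import numpy as np


def boundary_map(mask):
    """True where a pixel's 4-neighbourhood contains both EWC and background."""
    m = np.pad(mask.astype(bool), 1, mode="edge")  # edge padding: image border is not a boundary
    centre = m[1:-1, 1:-1]
    edge = np.zeros_like(centre)
    for dr, dc in ((-1, 0), (1, 0), (0, -1), (0, 1)):
        neighbour = m[1 + dr:m.shape[0] - 1 + dr, 1 + dc:m.shape[1] - 1 + dc]
        edge |= neighbour != centre
    return edge


def boundary_weighted_bce(prob, mask, boundary_weight=5.0, eps=1e-7):
    """Weighted mean binary cross-entropy; boundary pixels count `boundary_weight` times."""
    target = mask.astype(np.float64)
    p = np.clip(prob, eps, 1 - eps)  # avoid log(0)
    bce = -(target * np.log(p) + (1 - target) * np.log(1 - p))
    weights = np.where(boundary_map(mask), boundary_weight, 1.0)
    return float((weights * bce).sum() / weights.sum())
```

With this weighting, a misclassified pixel at a colony edge costs more than the same error deep inside a patch, which pushes training toward sharper delineation where plants meet open water.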

4. Post-Processing and Geospatial Integration

Segmentation outputs are converted into GIS-compatible formats, enabling direct integration with mapping and monitoring tools. This includes:

- Transformation of pixel-level masks into georeferenced spatial data

- Export to standard GIS formats (e.g., shapefiles)

- Alignment with previously developed coordinate conversion pipelines
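The georeferencing step can be illustrated with the affine geotransform convention used by GDAL, in which a six-element tuple `(x_origin, pixel_width, row_rotation, y_origin, column_rotation, pixel_height)` maps a pixel column and row to map coordinates. The sketch assumes polygon outlines have already been traced from the mask in pixel coordinates (for example with `rasterio.features.shapes`, which can also apply a transform directly); `pixel_to_geo`, `ring_to_geo`, and the 5 cm, UTM-style geotransform in the usage lines are hypothetical.

```python
def pixel_to_geo(geotransform, col, row):
    """Map a pixel corner (col, row) to map coordinates (GDAL geotransform order).

    A pixel's centre lies at (col + 0.5, row + 0.5).
    """
    x0, dx, rx, y0, ry, dy = geotransform
    x = x0 + col * dx + row * rx
    y = y0 + col * ry + row * dy
    return x, y


def ring_to_geo(geotransform, ring):
    """Convert a closed polygon ring given in pixel coordinates to map coordinates."""
    return [pixel_to_geo(geotransform, col, row) for col, row in ring]


# Usage with an assumed 5 cm, north-up geotransform in a metric CRS.
gt = (500000.0, 0.05, 0.0, 5000000.0, 0.0, -0.05)
outline = ring_to_geo(gt, [(0, 0), (10, 0), (10, 4), (0, 4), (0, 0)])
```

The resulting coordinate rings could then be written to a shapefile with a GIS library such as GDAL/OGR, using the orthomosaic's coordinate reference system.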

Expected Impact

The transition to a more advanced semantic segmentation framework is expected to significantly enhance the analytical value of the system. By capturing the full spatial extent and structure of EWC infestations, this phase is intended to enable:

- More accurate estimation of vegetation coverage and density

- Improved identification of colony boundaries and growth patterns

- Enhanced support for targeted removal and intervention strategies

- Seamless integration into geospatial analysis platforms
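Coverage and colony statistics follow directly from a georeferenced mask. The sketch below treats each 4-connected group of EWC pixels as one colony, which is a simplifying assumption (a real workflow might also drop tiny fragments or merge nearby patches), and assumes a north-up raster in a projected CRS measured in metres, so that one pixel covers `abs(pixel_width * pixel_height)` square metres. `colony_stats` is a hypothetical helper; for large NumPy masks, `scipy.ndimage.label` offers equivalent connected-component labelling.

```python
from collections import deque


def colony_stats(mask, pixel_area_m2):
    """Label 4-connected EWC regions and report per-colony area, total area and coverage.

    mask: 2-D list of 0/1 values (1 = EWC); pixel_area_m2: ground area of one pixel.
    """
    h, w = len(mask), len(mask[0])
    seen = [[False] * w for _ in range(h)]
    colony_areas = []
    for r in range(h):
        for c in range(w):
            if mask[r][c] and not seen[r][c]:
                # Breadth-first flood fill of one connected EWC region.
                size, queue = 0, deque([(r, c)])
                seen[r][c] = True
                while queue:
                    y, x = queue.popleft()
                    size += 1
                    for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                        if 0 <= ny < h and 0 <= nx < w and mask[ny][nx] and not seen[ny][nx]:
                            seen[ny][nx] = True
                            queue.append((ny, nx))
                colony_areas.append(size * pixel_area_m2)
    total = sum(colony_areas)
    coverage = total / (h * w * pixel_area_m2)  # fraction of the mapped area
    return colony_areas, total, coverage


mask = [[1, 1, 0, 0, 0],
        [1, 0, 0, 1, 1],
        [0, 0, 0, 1, 0],
        [0, 0, 0, 0, 0]]
areas, total_m2, coverage = colony_stats(mask, pixel_area_m2=0.05 * 0.05)
```

In this toy mask, two colonies of three pixels each are found, together covering 30% of the tile.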

Additionally, the improved segmentation approach is expected to generalize better across diverse environmental conditions and to perform more stably on large-scale imagery than earlier methods.

Contact

For technical details, implementation inquiries, or collaboration opportunities, please contact us via info@saiwa.ai or through the official Saiwa communication channels.